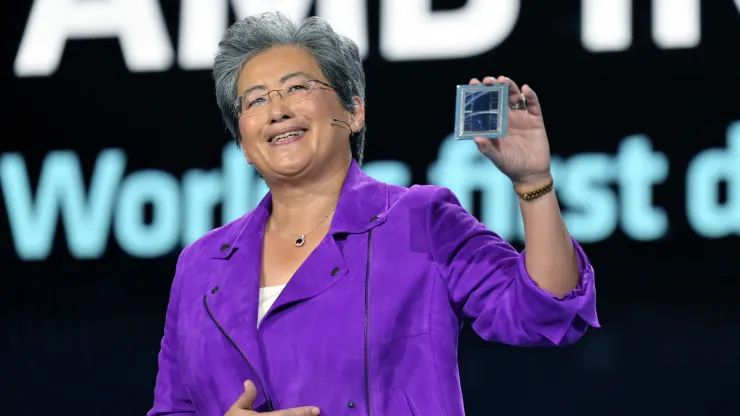

Meta, OpenAI, and Microsoft said at an AMD investor event Wednesday they will use AMD’s newest AI chip, the Instinct MI300X. It’s the biggest sign so far that technology companies are searching for alternatives to the expensive Nvidia graphics processors that have been essential for creating and deploying artificial intelligence programs such as OpenAI’s ChatGPT.

If AMD’s latest high-end chip is good enough for the technology companies and cloud service providers building and serving AI models when it starts shipping early next year, it could lower the cost of developing AI models and put competitive pressure on Nvidia, whose AI chip sales have been surging.

“All of the interest is in big iron and big GPUs for the cloud,” AMD CEO Lisa Su said Wednesday.

AMD says the MI300X is based on a new architecture, which often leads to significant performance gains. Its most distinctive feature is its 192GB of a cutting-edge, high-performance type of memory known as HBM3, which transfers data faster and lets a single chip hold larger AI models.
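To give a rough sense of what 192GB of memory means for model size, here is a back-of-the-envelope sketch in Python. It is not AMD's own calculation. It counts model weights only (ignoring the extra memory that running a model needs), treats 1GB as 10^9 bytes, and uses common bytes-per-parameter values for each number format. The function names are made up for illustration.

```python
# Rough sketch: how many model parameters fit in a given amount of GPU memory.
# Assumptions (not from the article): weights only, no activations or caches,
# 1 GB = 10**9 bytes, and the bytes-per-parameter values listed below.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def max_params_billions(memory_gb: float, dtype: str) -> float:
    """Largest parameter count (in billions) whose weights fit in memory_gb."""
    bytes_available = memory_gb * 10**9
    return bytes_available / BYTES_PER_PARAM[dtype] / 10**9


def fits(params_billions: float, memory_gb: float, dtype: str) -> bool:
    """True if a model with this many parameters fits, counting weights only."""
    return params_billions <= max_params_billions(memory_gb, dtype)


if __name__ == "__main__":
    # 192 is the MI300X memory figure quoted in the article.
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: up to ~{max_params_billions(192, dtype):.0f}B parameters")
```

Under these assumptions, 192GB holds the weights of a model with about 96 billion parameters in 16-bit precision. Real deployments need headroom beyond the weights, so the practical limit is lower.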

Su directly compared the MI300X and the systems built with it to Nvidia’s main AI GPU, the H100.

“What this performance does is it just directly translates into a better user experience,” Su said. “When you ask a model something, you’d like it to come back faster, especially as responses get more complicated.”

The main question facing AMD is whether companies that have been building on Nvidia will invest the time and money to add another GPU supplier. “It takes work to adopt AMD,” Su said.

AMD on Wednesday told investors and partners that it had improved its software suite, called ROCm, to compete with Nvidia’s industry-standard CUDA software, addressing a key shortcoming that has been one of the primary reasons AI developers prefer Nvidia.

Price will also be important. AMD didn’t reveal pricing for the MI300X on Wednesday, but Nvidia’s chips can cost around $40,000 each, and Su told reporters that AMD’s chip would have to cost less to purchase and operate than Nvidia’s in order to persuade customers to buy it.

Who says they’ll use the MI300X?

On Wednesday, AMD said it had already signed up some of the companies most hungry for GPUs to use the chip. Meta and Microsoft were the two largest purchasers of Nvidia H100 GPUs in 2023, according to a recent report from research firm Omdia.

Meta said it will use MI300X GPUs for AI inference workloads such as processing AI stickers, image editing, and operating its assistant.

Microsoft’s CTO, Kevin Scott, said the company would offer access to MI300X chips through its Azure web service.

Oracle’s cloud will also use the chips.

OpenAI said it would support AMD GPUs in one of its software products, called Triton, which isn’t a large language model like GPT but is used in AI research to access chip features.

AMD isn’t forecasting massive sales for the chip yet, only projecting about $2 billion in total data center GPU revenue in 2024. Nvidia reported more than $14 billion in data center sales in the most recent quarter alone, although that metric includes chips other than GPUs.

However, AMD says the total market for AI GPUs could climb to $400 billion over the next four years, doubling the company’s previous projection. This shows how high expectations are and how coveted high-end AI chips have become — and why the company is now focusing investor attention on the product line.

Su also suggested to reporters that AMD doesn’t think that it needs to beat Nvidia to do well in the market.

“I think it’s clear to say that Nvidia has to be the vast majority of that right now,” Su told reporters, referring to the AI chip market. “We believe it could be $400 billion-plus in 2027. And we could get a nice piece of that.”